What Is the Edge? What Are Origin Servers?

Introduction

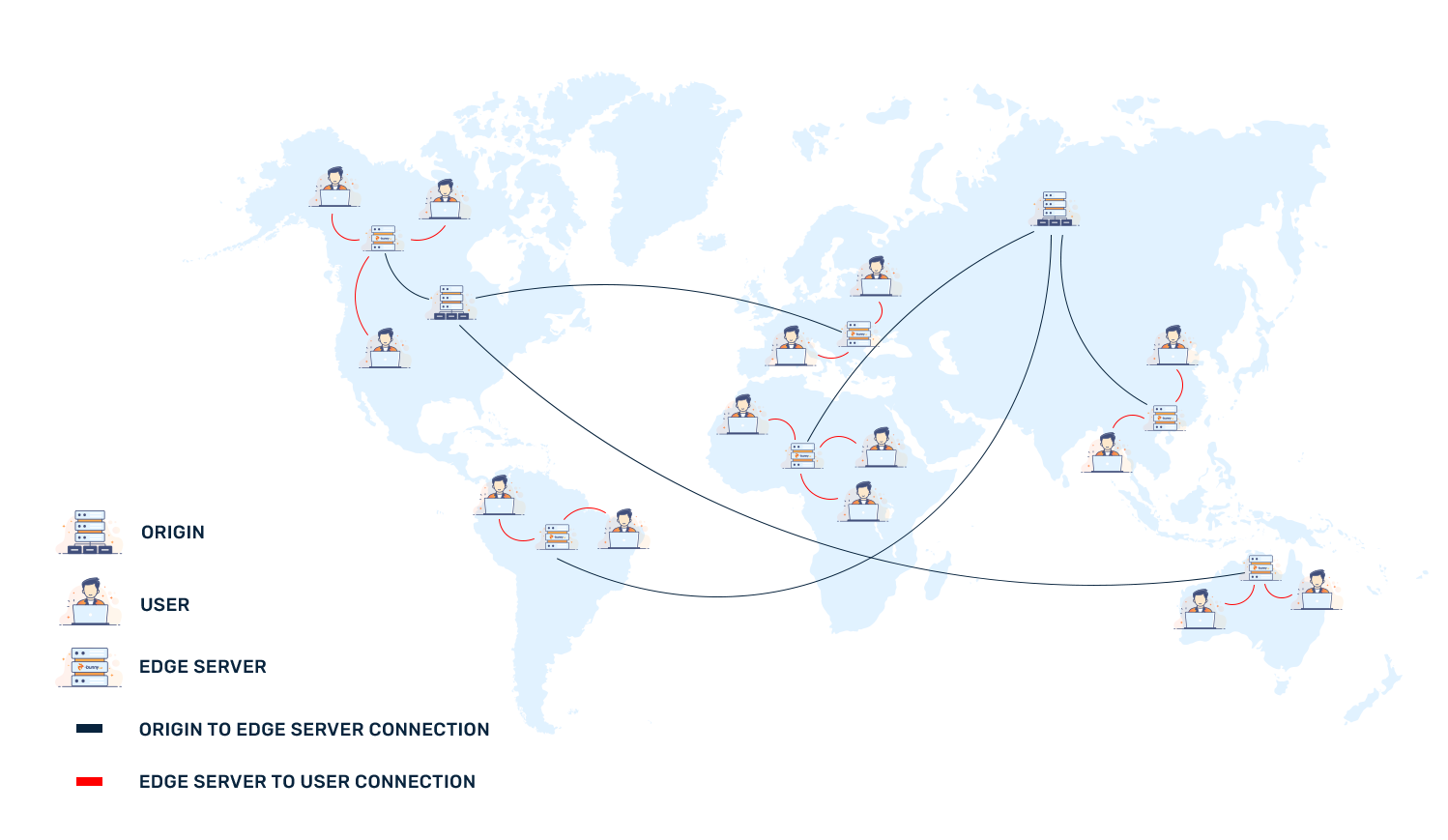

Content Delivery Networks (CDNs) can seem complicated, but the core ideas are accessible to everyone. This article explains what a CDN does and how a network of many servers improves on the performance of a single origin server.

Origin Servers

To begin, an origin server is where your content originates. Whether a user requests the HTML file for your website, a cat picture, or an hour-long video, every Content Delivery Network (CDN) has to pull that content from somewhere. The origin does not need to be a single server. CDNs commonly offer failover monitoring and load balancing for origins, which dynamically “switch” the origin server that the “edge” communicates with in order to manage load or work around scheduled downtime on a particular server.
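As a rough illustration of failover and load balancing across several origins, the sketch below rotates requests over the origins currently marked healthy and skips any that a health monitor has flagged as down. The class name `OriginPool`, its methods, and the origin labels are hypothetical and not taken from any particular CDN; a real health monitor would also probe origins on a schedule rather than being told their status.

```python
class OriginPool:
    """Round-robin over healthy origins (illustrative only)."""

    def __init__(self, names):
        # Every origin starts out healthy; insertion order is preserved.
        self._health = {name: True for name in names}
        self._next = 0

    def mark(self, name, healthy):
        """Record the result of a (hypothetical) health check."""
        if name not in self._health:
            raise KeyError(f"unknown origin: {name}")
        self._health[name] = healthy

    def pick(self):
        """Return the next healthy origin, or fail if none are left."""
        healthy = [name for name, ok in self._health.items() if ok]
        if not healthy:
            raise RuntimeError("no healthy origin available")
        choice = healthy[self._next % len(healthy)]
        self._next += 1
        return choice


pool = OriginPool(["origin-a", "origin-b"])
first, second = pool.pick(), pool.pick()   # alternates between both origins
pool.mark("origin-a", False)               # e.g. scheduled downtime
during_downtime = pool.pick()              # only origin-b is eligible now
```

Round-robin is only one possible balancing policy; weighted or least-loaded selection would fit the same interface.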

In principle, an origin server doesn’t have to be a customer’s own server; it can be a central cache or even another edge server. In essence, an origin can be any source of a website’s content.

Edge Servers

Once content has been pulled from an origin server, it flows to what is called the “edge.” The difference between an “origin” and an “edge” is that origins are often placed in a single location, while edge servers are placed around the world so that content can be cached close to where users are. This lowers load times for subsequent requests and improves the user experience. With stale caching (serving a previously cached copy when the origin cannot be reached) and origin IP shielding (keeping the origin’s address hidden behind the CDN), CDNs also offer webmasters an important feature: the ability to remain online, even during origin issues or a DDoS attack.
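The caching and stale-serving behavior described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any specific CDN implements it: `EdgeCache`, the `fetch_from_origin` callback, the 60-second default lifetime, and the `HIT`/`MISS`/`STALE` labels are all hypothetical names chosen for the example.

```python
import time


class EdgeCache:
    """Caches origin responses and serves stale copies if the origin fails."""

    def __init__(self, fetch_from_origin, ttl_seconds=60.0, clock=time.monotonic):
        self._fetch = fetch_from_origin   # callable: path -> body
        self._ttl = ttl_seconds
        self._clock = clock               # injectable so it can be tested
        self._store = {}                  # path -> (body, stored_at)

    def get(self, path):
        now = self._clock()
        entry = self._store.get(path)
        # Fresh copy at the edge: no trip to the origin needed.
        if entry is not None and now - entry[1] < self._ttl:
            return entry[0], "HIT"
        try:
            body = self._fetch(path)
        except Exception:
            # Origin is unreachable: fall back to the expired copy if any.
            if entry is not None:
                return entry[0], "STALE"
            raise
        self._store[path] = (body, now)
        return body, "MISS"
```

The first request for a path goes to the origin, later requests within the lifetime are answered from the edge, and an expired entry is still served if the origin raises an error.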

Note: Locally stored caches on the “edge” are volatile. Content that hasn’t been accessed in a while can be replaced with more frequently accessed content (potentially from another customer or user).
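One common way to get this behavior is a least-recently-used (LRU) policy, shown below as a sketch. The article does not say which eviction policy any given CDN uses, so LRU is an assumption here, and `LruStore` and its capacity of two entries are hypothetical.

```python
from collections import OrderedDict


class LruStore:
    """Fixed-size store that evicts the least recently used entry."""

    def __init__(self, capacity):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self._capacity = capacity
        self._items = OrderedDict()   # oldest access first, newest last

    def get(self, key):
        if key not in self._items:
            return None
        self._items.move_to_end(key)  # reading counts as a recent access
        return self._items[key]

    def put(self, key, value):
        self._items[key] = value
        self._items.move_to_end(key)
        # Drop the least recently used entries until we fit again.
        while len(self._items) > self._capacity:
            self._items.popitem(last=False)


store = LruStore(capacity=2)
store.put("/logo.png", "customer A logo")
store.put("/old.css", "rarely used stylesheet")
store.get("/logo.png")                       # keeps the logo "warm"
store.put("/video.mp4", "customer B video")  # evicts /old.css
```

After the last call, `/old.css` is gone while the recently read `/logo.png` survives, which is why a request for rarely accessed content may have to go back to the origin.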

Another advantage of globally distributed edge servers (i.e., servers that are around the world, close to users) is resilience. Combined with intelligent routing and Anycast networks, they allow traffic to be spread across many locations and malicious traffic to be mitigated at the edge, which protects the origin from excessive bandwidth costs and improves performance worldwide.

Edge Computing and WAF

The popularity of “edge” computing has increased in recent years. Users often do not want to pay large amounts of money to run short compute tasks on large servers around the world. They now have the option to use compute resources close to where their users are, on demand, for a fixed price. Because edge servers are geographically closer to end users, latency per request is lower, which increases user satisfaction. Powerful edge computing can also deliver real-time experiences without sending requests across the globe and back.
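To put a rough number on the latency benefit, the sketch below estimates the best-case round-trip time imposed by distance alone. It assumes light travels through optical fiber at about 200,000 km per second (roughly two thirds of its speed in a vacuum) and ignores routing detours, queuing, and processing time, so real latencies are higher. The function name and the example distances are illustrative.

```python
# Approximate signal speed in optical fiber: 200,000 km/s = 200 km per ms.
FIBER_KM_PER_MS = 200.0


def min_round_trip_ms(distance_km):
    """Lower bound on round-trip time for a given one-way distance."""
    return 2 * distance_km / FIBER_KM_PER_MS


far_origin_ms = min_round_trip_ms(10_000)   # origin on another continent
nearby_edge_ms = min_round_trip_ms(100)     # edge server in the same region
```

Under these assumptions, a server 10,000 km away costs at least 100 ms per round trip, while one 100 km away costs about 1 ms, and a single page load usually involves many round trips.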

One final thing that can run on an edge server is a Web Application Firewall (WAF). Whether you want to block XSS attacks or limit excessive requests, a WAF at the edge provides the low latency users expect while allowing granular rules to be applied to meet a specific website’s requirements. For example, constant attacks on a WordPress “admin” page can likely be blocked with a single rule, which reduces load on the origin (where your WordPress site is running) and keeps it up so that legitimate traffic can continue through.
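The two kinds of rule mentioned above, blocking a path and limiting excessive requests, might look like the following sketch. It is a simplified illustration rather than a real WAF: `EdgeWaf`, the `/wp-admin` prefix, the limits, and the returned strings are hypothetical, and production rules typically inspect far more than the path and client IP.

```python
from collections import defaultdict, deque


class EdgeWaf:
    """Path blocking plus a sliding-window rate limit per client IP."""

    def __init__(self, blocked_prefixes, max_requests, window_seconds):
        self._blocked = tuple(blocked_prefixes)
        self._max = max_requests
        self._window = window_seconds
        self._hits = defaultdict(deque)   # client IP -> request timestamps

    def check(self, client_ip, path, now):
        # Rule 1: refuse protected paths before they reach the origin.
        if path.startswith(self._blocked):
            return "block: path rule"
        # Rule 2: forget requests that have left the time window.
        hits = self._hits[client_ip]
        while hits and now - hits[0] >= self._window:
            hits.popleft()
        if len(hits) >= self._max:
            return "block: rate limit"
        hits.append(now)
        return "allow"


waf = EdgeWaf(["/wp-admin"], max_requests=2, window_seconds=10)
admin_probe = waf.check("203.0.113.7", "/wp-admin/index.php", now=0)
normal_visit = waf.check("203.0.113.8", "/", now=0)
```

Because both checks run at the edge, blocked requests never consume origin bandwidth or CPU. A real deployment would usually exempt trusted administrators from the path rule rather than blocking the prefix for everyone.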