Last week, we announced our most extensive network expansion yet, but it was only one piece of a larger goal. In 2022, we set out to build the fastest network in the world. One month into 2023, we made it happen, giving the Year of the Bunny an incredible start!

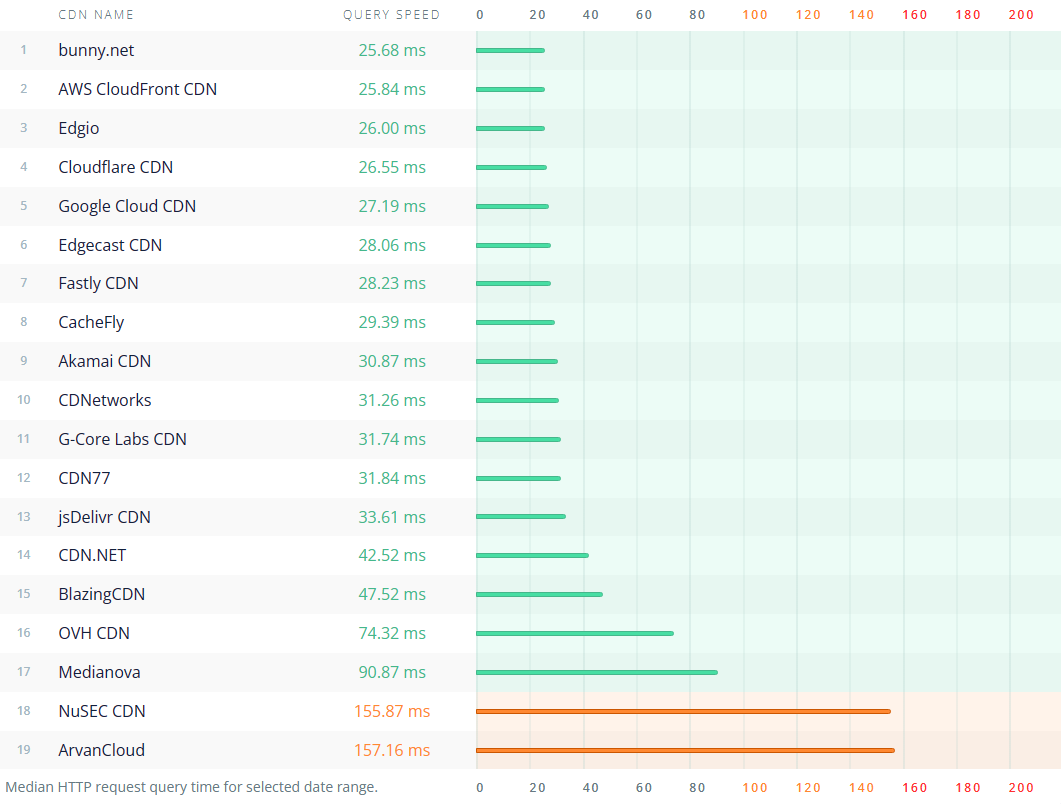

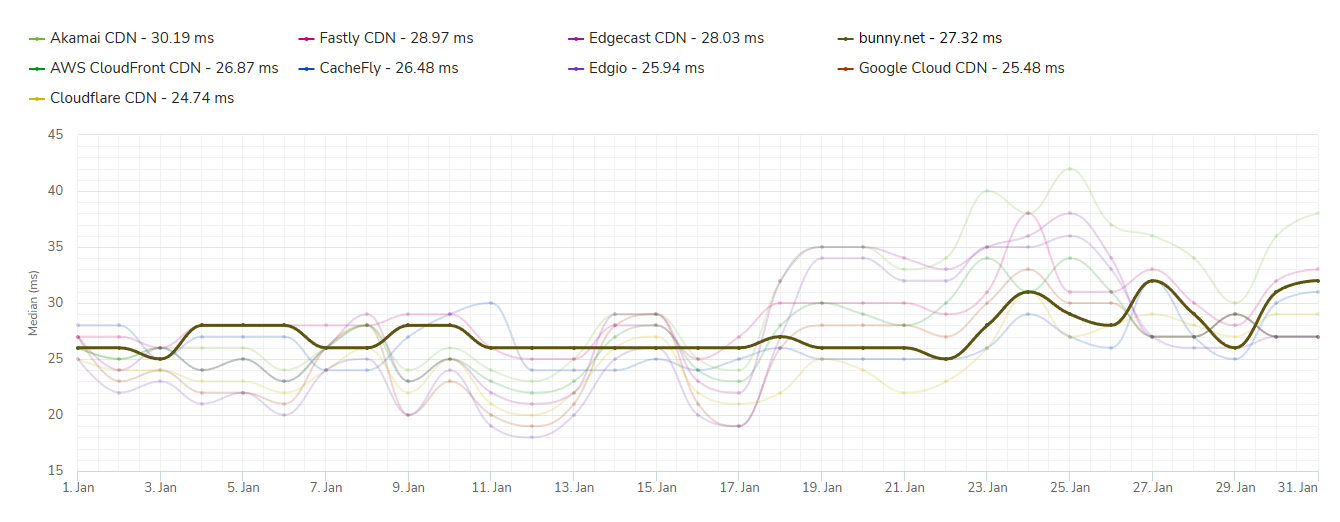

Today, we're thrilled to announce that in January, CDNPerf, an independent CDN performance monitoring service provided by PerfOps, ranked bunny.net as the fastest CDN platform for global delivery. Based on billions of real-world user tests worldwide, we can confirm that bunny.net is indeed hopping ahead of the pack.

When we started bunny.net, our goal was to offer a cost-effective CDN solution. Over time, that evolved into a mission to help build a faster internet. Now, we combine the best of both worlds: bunny.net users can leverage the network with the world's lowest average latency at some of the most competitive price points on the market.

Performance by region

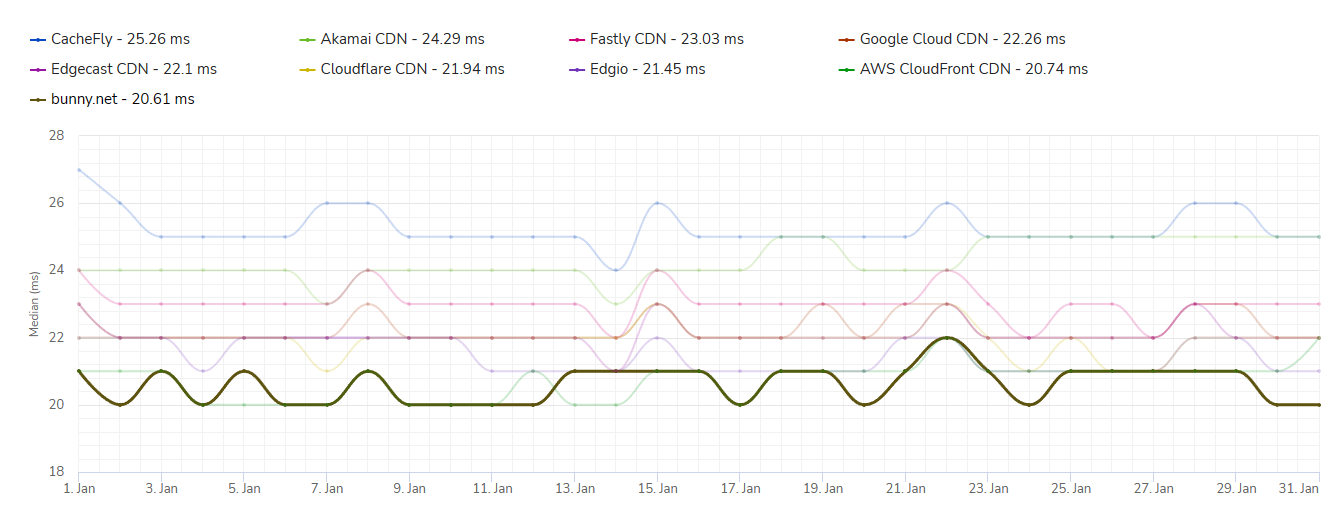

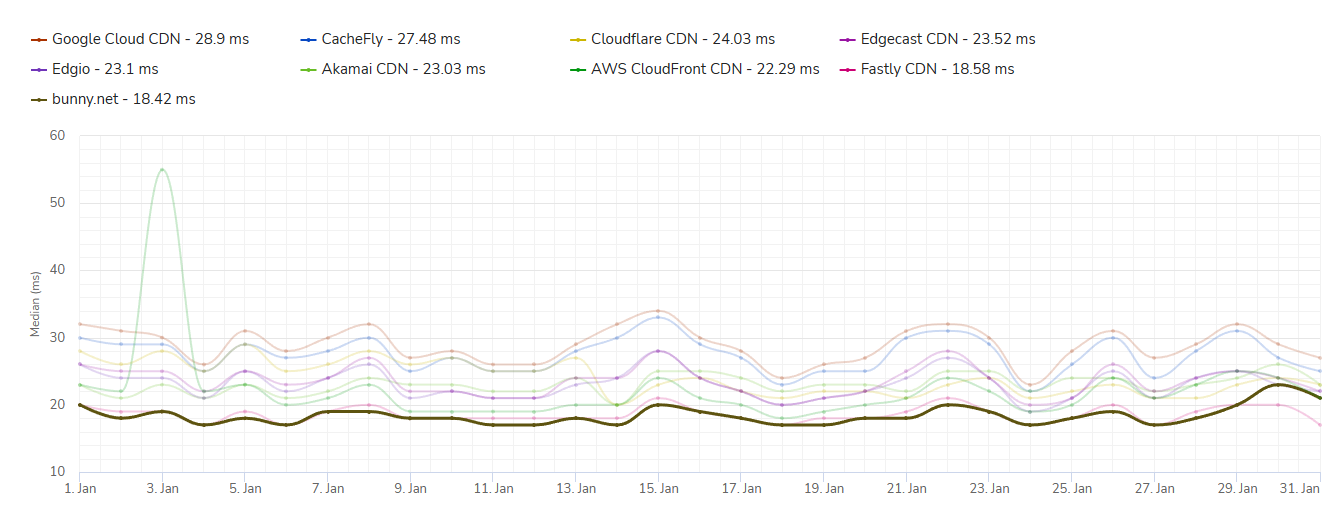

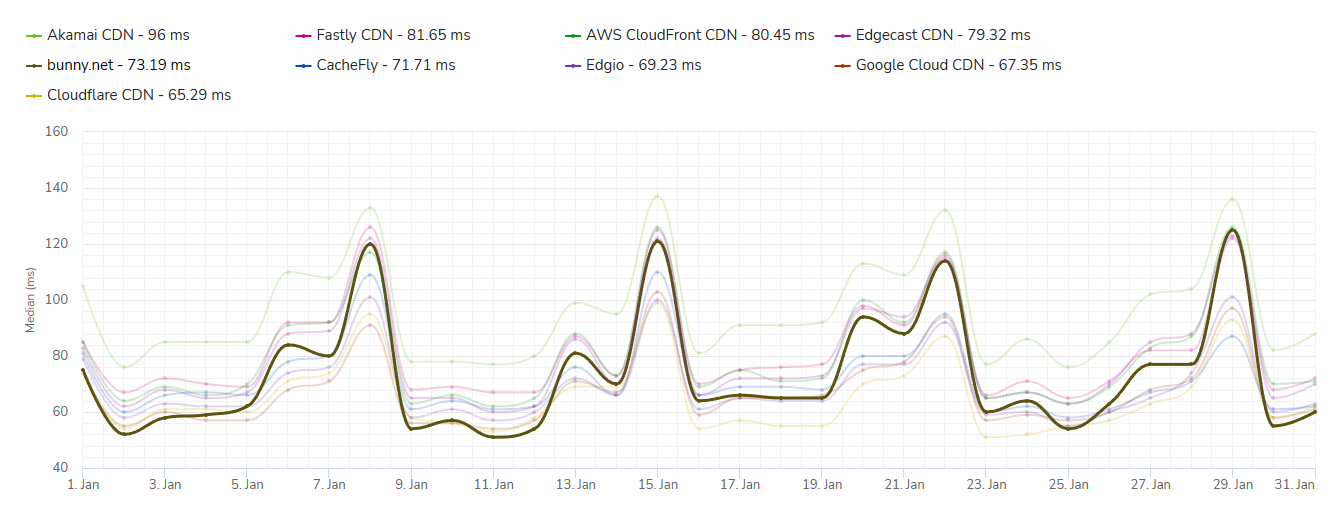

On top of ranking as the fastest CDN by global average, bunny.net also won the race in most regions around the world, helping over a million websites hop noticeably faster than the rest throughout Europe, Asia, Oceania, and Africa.

How did we get there?

Last week, we announced our most significant network expansion yet, which helps us save the world up to 9 years' worth of time daily. It was an incredible feat for a single year and a huge step towards global performance.

However, this was just one piece of the puzzle. Today, we want to share more details on how we worked throughout 2022 to make your content hop faster than ever.

Network Expansion

The biggest contributor to pushing forward network performance was the expansion itself. Throughout 2022, we introduced 43 new PoPs in over 35 countries worldwide. This alone brought your content just milliseconds away from over a billion users around the globe.

With coverage in over 114 cities around the world, bunny.net now runs one of the most extensive global networks.

From consumer SSD to enterprise NVMe drives

The next piece of the puzzle is the hardware we run on. A few years ago, most major providers, including Bunny CDN, bragged about using SSD storage to power edge networks.

However, when every millisecond matters, even SSDs can quickly become a bottleneck, and we wanted to go faster. Throughout 2022, we began migrating most of our global network to cutting-edge, enterprise-grade NVMe drives.

This way, we can squeeze every last bit of performance out of our hardware, with disk latencies as low as 300 microseconds and almost ten times higher IOPS and throughput than our previous SSDs.
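To make the link between per-operation latency and IOPS concrete, Little's law says throughput equals the number of requests in flight divided by the time each one takes. The sketch below is a back-of-the-envelope bound only, assuming every I/O takes exactly the quoted 300 µs; `max_iops` is a hypothetical helper and the queue depths are illustrative, since real NVMe drives serve many requests in parallel.

```python
def max_iops(latency_seconds: float, queue_depth: int = 1) -> float:
    """Upper bound on IOPS from Little's law: throughput = concurrency / latency.

    Assumes a fixed per-I/O latency; this is an illustration, not a drive model.
    """
    if latency_seconds <= 0:
        raise ValueError("latency must be positive")
    if queue_depth < 1:
        raise ValueError("queue depth must be at least 1")
    return queue_depth / latency_seconds


# One request in flight at a time, 300 microseconds each
print(round(max_iops(300e-6)))       # 3333
# 32 requests in flight, same per-request latency
print(round(max_iops(300e-6, 32)))   # 106667
```

Lower latency raises the ceiling at every queue depth, which is why shaving disk latency matters even when the drive is not saturated.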

To illustrate this, here is a comparison of our old standard hardware stack and the new NVMe one.

Expanding our anycast network

Thanks to our SmartHop routing engine, we route requests to the optimal destination on the map using a mix of GeoDNS, anycast, latency-based routing, and even the makeup of your content library. We weigh these factors to ensure exceptional performance in all regions worldwide, making it easier to interconnect such a massive global footprint.
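As a rough illustration of how latency-based routing and GeoDNS can be combined, the sketch below prefers the healthy PoP with the lowest measured latency from the client's network and falls back to a same-country (GeoDNS-style) match when no measurements exist. This is a minimal sketch under stated assumptions, not SmartHop's actual logic: the `PoP` type, `pick_pop`, and the PoP names are hypothetical, and it ignores anycast and content-library factors entirely.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class PoP:
    name: str          # hypothetical PoP identifier, e.g. "LAX"
    country: str       # ISO country code where the PoP is located
    healthy: bool = True


def pick_pop(client_country: str, pops: List[PoP], latency_ms: Dict[str, float]) -> PoP:
    """Choose a PoP for a client (illustrative only).

    latency_ms maps PoP name -> measured latency in ms from the client's network.
    """
    healthy = [p for p in pops if p.healthy]
    if not healthy:
        raise RuntimeError("no healthy PoPs available")
    # 1) Latency-based: use measurements when we have any for healthy PoPs.
    measured = [p for p in healthy if p.name in latency_ms]
    if measured:
        return min(measured, key=lambda p: latency_ms[p.name])
    # 2) GeoDNS-style fallback: a healthy PoP in the client's country.
    same_country = [p for p in healthy if p.country == client_country]
    # 3) Last resort: the first healthy PoP in the list.
    return (same_country or healthy)[0]
```

With `latency_ms={"NYC": 42.0, "LAX": 11.5}`, a US client is sent to `LAX`; with no measurements, a German client falls back to a German PoP. The single-country fallback also shows why pure GeoDNS struggles once a country has several PoPs: it has no signal for choosing among them.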

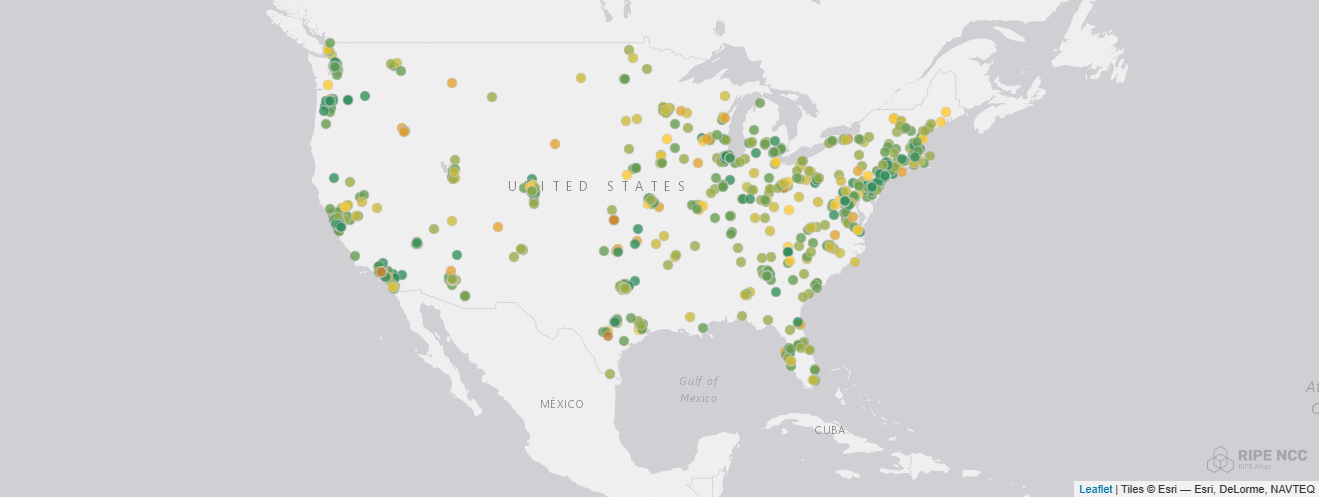

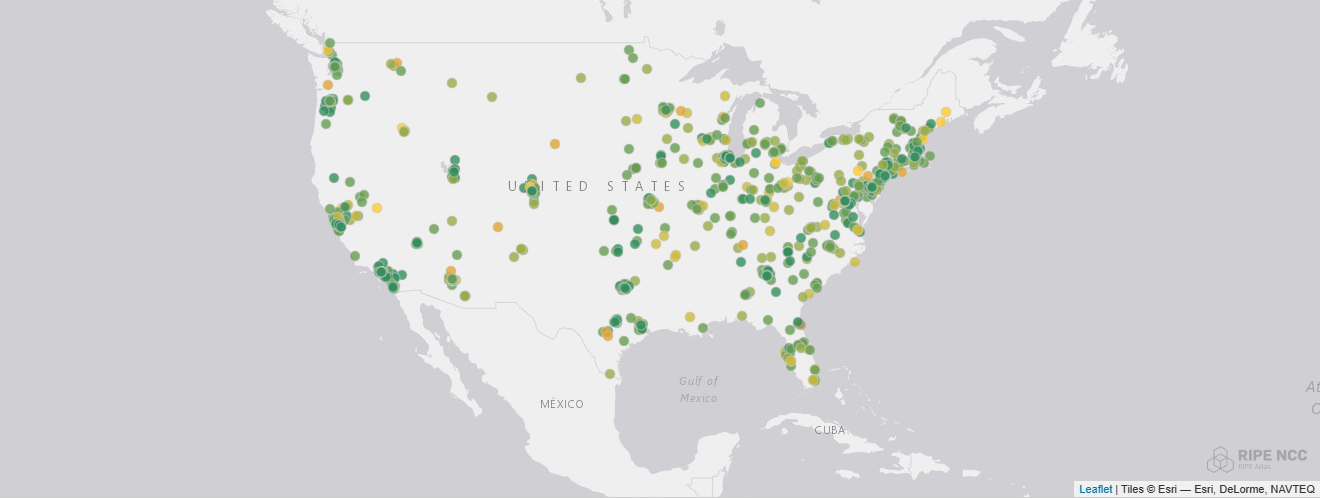

In the past, we relied much more on GeoDNS and latency-based routing, which worked incredibly well with a single PoP per country. However, as we continued expanding within the United States and Australia, GeoDNS could not keep up. So last year, we expanded our anycast network within multiple regions, including the US, India, and Australia, where we have a presence in multiple cities within a single country.

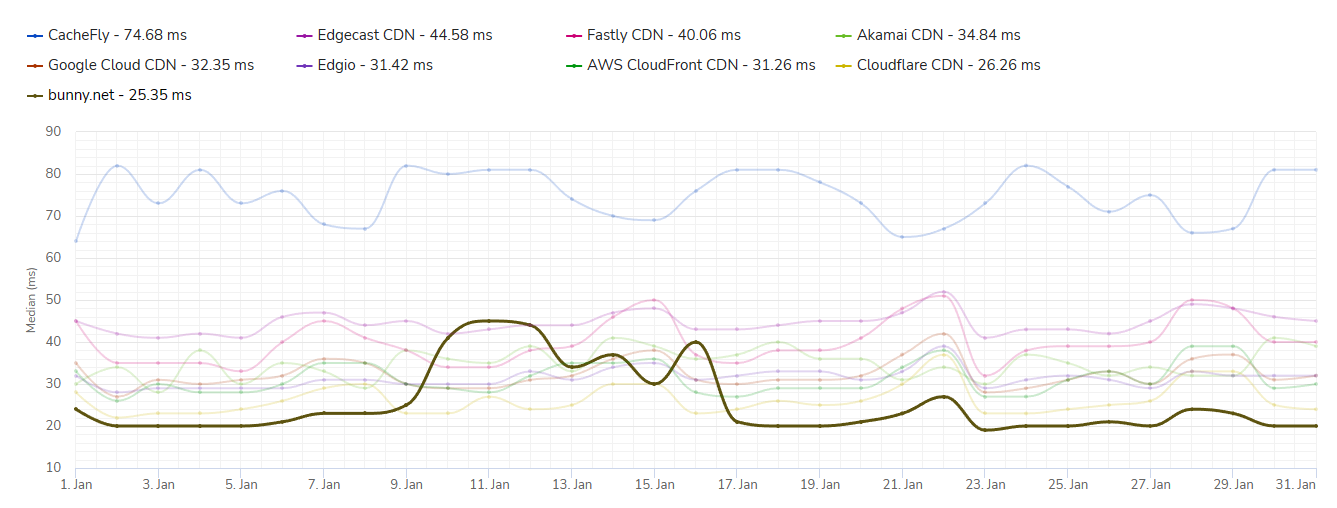

To best illustrate this, here is a latency comparison of the old GeoDNS routing engine and the new, ultra-fast anycast network within the United States.

With the new anycast network, over 75% of the 1000 test nodes see latency within 20 ms, compared to 59% with the old one. Additionally, bunny.net users now enjoy a reduction in median latency within the US of over 20%, along with significantly reduced variance.
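The two metrics quoted above, the share of test nodes within a latency threshold and the relative drop in median latency, are straightforward to compute from per-node measurements. The sketch below assumes "within 20 ms" means at or below 20 ms; the function names are hypothetical and the sample lists in the test are made-up inputs, not bunny.net's measurements.

```python
import statistics
from typing import Sequence


def share_within(latencies_ms: Sequence[float], threshold_ms: float = 20.0) -> float:
    """Fraction of test nodes whose latency is at or below threshold_ms."""
    if not latencies_ms:
        raise ValueError("need at least one measurement")
    return sum(1 for x in latencies_ms if x <= threshold_ms) / len(latencies_ms)


def median_reduction(old_ms: Sequence[float], new_ms: Sequence[float]) -> float:
    """Relative reduction in median latency; 0.2 means the new median is 20% lower."""
    old_median = statistics.median(old_ms)
    return (old_median - statistics.median(new_ms)) / old_median
```

For example, with five made-up old samples `[12, 18, 25, 30, 40]` and new samples `[10, 14, 16, 22, 35]`, the share within 20 ms rises from 0.4 to 0.6 and the median drops by 36%.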

Finally, we also extended our routing engine to support ASN-specific rules and overrides. Together, the software and the network allow us to make highly granular decisions for every request to ensure it reaches the optimal destination.
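An ASN override can be thought of as a lookup that runs before the default routing decision: if the client's network (its autonomous system number) has a rule, that rule wins. The sketch below is a hypothetical illustration, not bunny.net's rule format; `ASN_OVERRIDES`, `route`, and the PoP names are assumptions, and the ASNs come from the range reserved for documentation by RFC 5398.

```python
from typing import Dict, Optional

# Hypothetical override table: client ASN -> PoP name.
# 64496-64511 are 16-bit ASNs reserved for documentation (RFC 5398).
ASN_OVERRIDES: Dict[int, str] = {
    64496: "FRA",
    64500: "AMS",
}


def route(client_asn: Optional[int], default_pop: str,
          overrides: Dict[int, str] = ASN_OVERRIDES) -> str:
    """Return the override PoP for the client's ASN, otherwise the default choice.

    default_pop stands in for whatever the normal routing logic selected.
    The overrides mapping is only read, never modified.
    """
    if client_asn is not None and client_asn in overrides:
        return overrides[client_asn]
    return default_pop
```

Checking overrides first keeps the rules cheap to evaluate per request and makes them an explicit escape hatch for networks where the general heuristics pick a poor path.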

Hardware & software optimizations

As part of the network overhaul, our infrastructure team has been diligently testing, tweaking, and improving both our hardware and software stacks. From disk schedulers to OS and NIC settings, we've carefully fine-tuned every part of our stack to squeeze out every last bit of performance.

On the software side, we completely reengineered how we handle I/O and configuration caching, from using asynchronous file opens in Nginx to smart configuration reloading. Instead of reading and writing cache files, our system now signals Nginx directly when a configuration file is updated, avoiding unnecessary file loading. Together, these two changes eliminate tens of thousands of file operations per second and significantly reduce the load on the Nginx event loop.
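The general idea behind signal-driven reloading is that readers keep a parsed configuration in memory and only go back to disk when told the file changed, instead of re-reading it on every request. The sketch below shows that pattern in plain Python; it is a generic illustration under that assumption, not bunny.net's or Nginx's mechanism, and `ConfigCache`, `notify_updated`, and `file_reads` are hypothetical names.

```python
import json
import os
import tempfile
import threading


class ConfigCache:
    """Keeps a parsed JSON config in memory and re-reads it only when signalled.

    Re-reading per request costs one file operation each time; here a writer calls
    notify_updated() after replacing the file, so readers hit the disk once per change.
    """

    def __init__(self, path: str):
        self._path = path
        self._lock = threading.Lock()
        self._dirty = True          # force the first get() to load from disk
        self._config = None
        self.file_reads = 0         # counts actual disk reads, for illustration

    def notify_updated(self) -> None:
        """Called by the writer after the config file has been replaced."""
        with self._lock:
            self._dirty = True

    def get(self) -> dict:
        """Return the cached config, reloading from disk only if marked dirty."""
        with self._lock:
            if self._dirty:
                with open(self._path, encoding="utf-8") as f:
                    self._config = json.load(f)
                self.file_reads += 1
                self._dirty = False
            return self._config


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        path = os.path.join(d, "zone.json")
        with open(path, "w", encoding="utf-8") as f:
            json.dump({"cache_ttl": 60}, f)
        cache = ConfigCache(path)
        for _ in range(10_000):
            cache.get()             # served from memory after the first read
        print(cache.file_reads)     # 1
```

The trade-off is that a missed signal leaves a stale config in memory, so the writer must reliably call `notify_updated()` after every change.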

Overall, this allowed us to reduce problematic 99th-percentile latency spikes by as much as 90% in some regions.
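A 99th-percentile (p99) latency is the value that 99% of requests stay at or below, so it captures the occasional slow request that an average hides. The sketch below uses the nearest-rank definition, which is one common convention; the post does not state which percentile method was used, and `percentile` is a hypothetical helper.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of samples <= it."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    # Multiply before dividing to keep the rank exact for integer inputs.
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]
```

Because p99 is driven by the slowest 1% of requests, removing a few sources of stalls (such as excess file operations blocking an event loop) can cut it sharply even when the median barely moves.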

Still room to hop even faster!

Of course, internet networks are a complex web of interconnections. While we're hopping ahead in performance in most regions around the world today, we believe there is always room to go even faster, and there are plenty of regions where we can continue to improve!

We also understand that CDNPerf is just one way to measure performance, so we're continuing with a single focus: to go even faster!

Going forward, we will continue to aggressively pursue our mission and push performance to the next level. We will also put significant additional focus on North and South America and start exploring further expansion routes within Africa to keep building a better experience for the internet.

Help us make the internet hop faster!

At bunny.net, we are on an ambitious mission to help make the internet hop faster. If you are passionate about networking, technology, and performance, make sure to check out our Careers Page. We have many different positions open and would love to have you on board.