What is Video Transcoding?

Introduction

Transcoding means converting information from one form to another. In video, transcoding lets us change both the container (such as MP4 or WebM) and the codec used to compress the video. For example, you might convert a video encoded with Google's VP9 to H.264 so that it plays better on lower-end devices, which often have hardware decoding for H.264 but not for VP9.
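Here is a minimal sketch of the VP9-to-H.264 conversion as an FFmpeg command. It assumes FFmpeg is installed and built with the libx264 encoder. The helper name and file names are hypothetical. The function only assembles the argument list; to execute it, you could pass the list to `subprocess.run`.

```python
import shlex


def vp9_to_h264_cmd(src: str, dst: str) -> list[str]:
    """Build an FFmpeg command that re-encodes a (VP9) video to H.264 in MP4."""
    return [
        "ffmpeg",
        "-i", src,            # input, e.g. a VP9 video in a WebM file
        "-c:v", "libx264",    # re-encode the video with the x264 H.264 encoder
        "-c:a", "aac",        # re-encode the audio to AAC, common in MP4 files
        dst,                  # the .mp4 extension selects the MP4 container
    ]


if __name__ == "__main__":
    cmd = vp9_to_h264_cmd("input_vp9.webm", "output_h264.mp4")
    print(shlex.join(cmd))
```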

With the rising popularity of streaming services and platforms like Twitch and YouTube, you might wonder how the same content is delivered to such a wide range of devices. Whether you're watching a movie on Netflix or a cat video on YouTube, you're usually watching content that was converted from another source.

Video transcoding is an essential step in the video delivery pipeline. Some content cannot be streamed at all until it is transcoded. A high-bitrate master file from an editing suite, for instance, is far too large to send to viewers directly. Using a powerful server or a hardware encoder, videos can be converted to many formats and bitrates to reach as many devices as possible.
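To serve many devices, the same source is often encoded several times at different resolutions and bitrates. The sketch below builds one FFmpeg command per rendition. It assumes FFmpeg with libx264. The ladder values, helper name, and file names are illustrative assumptions, not recommended settings.

```python
import shlex

# Hypothetical ladder: (output height in pixels, video bitrate in kbps).
# Real services tune these values per title and per device class.
LADDER = [(1080, 5000), (720, 3000), (480, 1200), (360, 600)]


def rendition_cmds(src: str, stem: str) -> list[list[str]]:
    """Build one FFmpeg command per rendition in the (hypothetical) ladder."""
    cmds = []
    for height, kbps in LADDER:
        cmds.append([
            "ffmpeg", "-i", src,
            "-vf", f"scale=-2:{height}",   # keep aspect ratio; -2 keeps width even
            "-c:v", "libx264", "-b:v", f"{kbps}k",
            "-c:a", "aac", "-b:a", "128k",
            f"{stem}_{height}p.mp4",
        ])
    return cmds


if __name__ == "__main__":
    for cmd in rendition_cmds("master.mov", "movie"):
        print(shlex.join(cmd))
```

Running the commands separately decodes the source once per rendition. FFmpeg can also write several outputs from a single invocation. That avoids the repeated decoding but makes the command line more complex.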

How transcoding is used

Transcoding makes media content playable on a wide variety of devices. Re-encoding also gives additional control over the output. For example, content can be compressed further, which helps mobile and lower-end devices that have limited bandwidth for streaming.

The audio track inside a video container can be converted as well. For example, 320 kbps audio might be excessive for listeners on Bluetooth headphones, which re-compress the audio with their own codec anyway. A streaming service might therefore re-encode the audio in an MP4 at 128 kbps to save bandwidth.
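Here is a minimal sketch of the audio-only case, assuming FFmpeg. The `-c:v copy` option passes the video stream through untouched, while the audio is re-encoded to AAC at 128 kbps. The second helper estimates the bandwidth saving. It assumes constant bitrates and ignores container overhead. The helper and file names are hypothetical.

```python
import shlex


def reencode_audio_cmd(src: str, dst: str, audio_kbps: int = 128) -> list[str]:
    """Copy the video stream as-is and re-encode only the audio to AAC."""
    return [
        "ffmpeg", "-i", src,
        "-c:v", "copy",                          # no video decode or encode
        "-c:a", "aac", "-b:a", f"{audio_kbps}k", # new audio bitrate
        dst,
    ]


def audio_megabytes_per_hour(kbps: int) -> float:
    """Size of one hour of audio at a constant bitrate, in decimal megabytes."""
    return kbps * 1000 * 3600 / 8 / 1_000_000


if __name__ == "__main__":
    print(shlex.join(reencode_audio_cmd("in.mp4", "out.mp4")))
    saved = audio_megabytes_per_hour(320) - audio_megabytes_per_hour(128)
    print(f"{saved:.1f} MB saved per hour of playback")
```

At constant bitrates, dropping from 320 kbps to 128 kbps saves 86.4 MB (decimal) of audio data per hour.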

What's the difference between transcoding and encoding?

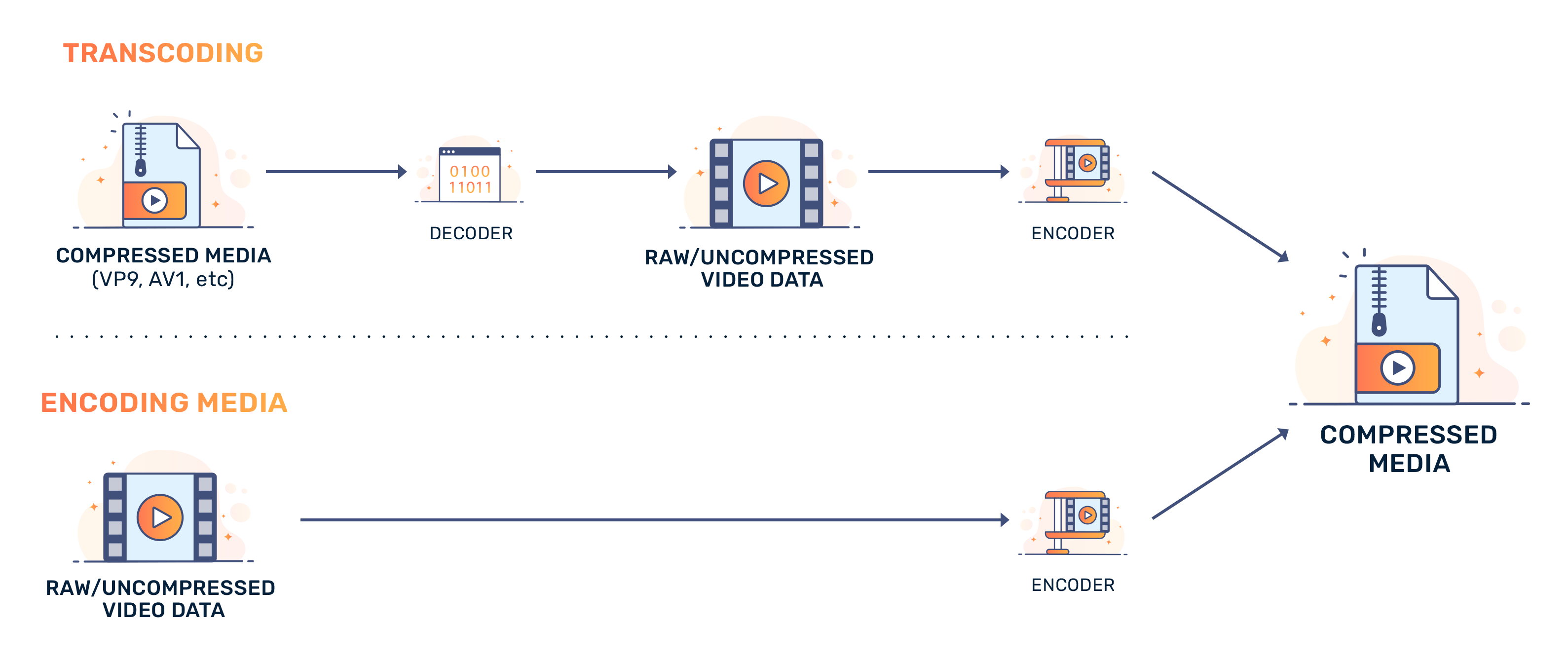

Video can come from a phone camera or a professional editing suite. Wherever it originates, raw video is far too large to store or stream, so it is encoded: converted from uncompressed data into a compressed format.

Transcoding, by contrast, converts video from one compressed format to another. All delivered video has been encoded at some point, but transcoding specifically means taking media that is already compressed, decoding it, and encoding it again in a different format.
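Here is a sketch that makes the distinction concrete, assuming FFmpeg. Remuxing with `-c copy` moves the existing compressed streams into a new container without decoding them, so no transcoding happens. A transcode, by contrast, decodes the input and encodes it again. Remuxing only works when the target container supports the existing codecs. The helper and file names are hypothetical.

```python
import shlex


def remux_cmd(src: str, dst: str) -> list[str]:
    """Change only the container: streams are copied, nothing is decoded."""
    return ["ffmpeg", "-i", src, "-c", "copy", dst]


def transcode_cmd(src: str, dst: str) -> list[str]:
    """Decode the compressed input, then re-encode it with new codecs."""
    return ["ffmpeg", "-i", src, "-c:v", "libx264", "-c:a", "aac", dst]


if __name__ == "__main__":
    print(shlex.join(remux_cmd("clip.mkv", "clip.mp4")))
    print(shlex.join(transcode_cmd("clip.mkv", "clip_h264.mp4")))
```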

Transcoding software

Many software tools can transcode media. One of the most widely used is FFmpeg, an open-source project that supports a wide range of hardware encoders and decoders (such as NVIDIA's NVENC or AMD's VCE). It also has software-only modes for when hardware acceleration isn't available or desirable. FFmpeg supports a broad range of codecs, which helps if you want to transcode a VP9 video to HEVC/H.265 to target iOS devices, or convert AV1 content to H.264 for lower-end devices.
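The two conversions mentioned above might be expressed as FFmpeg arguments like this. The sketch assumes an FFmpeg build with libx265, libx264, and an AV1 decoder. The target labels, helper name, and file names are hypothetical. The `-tag:v hvc1` option marks the HEVC stream in the way Apple's players expect in MP4 files.

```python
import shlex

# Illustrative mapping from a target to FFmpeg encoder arguments.
# The target names are hypothetical labels, not FFmpeg options.
TARGETS = {
    # HEVC for Apple devices; the hvc1 tag helps Apple players recognize the stream
    "ios_hevc": ["-c:v", "libx265", "-tag:v", "hvc1", "-c:a", "aac"],
    # H.264 for older or lower-end devices
    "legacy_h264": ["-c:v", "libx264", "-c:a", "aac"],
}


def target_cmd(src: str, dst: str, target: str) -> list[str]:
    """Build an FFmpeg command that re-encodes src for the given target."""
    if target not in TARGETS:
        raise ValueError(f"unknown target: {target!r}")
    return ["ffmpeg", "-i", src, *TARGETS[target], dst]


if __name__ == "__main__":
    print(shlex.join(target_cmd("talk_vp9.webm", "talk_hevc.mp4", "ios_hevc")))
    print(shlex.join(target_cmd("trailer_av1.mkv", "trailer_h264.mp4", "legacy_h264")))
```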

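Hardware encoders are fast, but they generally produce lower quality than software encoders at the same bitrate. For this reason, many people use hardware acceleration only for the decode stage of a transcode and rely on software encoding for the final output. Software encoding is still lossy, but it usually achieves better quality per bit, at the cost of speed.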
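The hybrid approach could be sketched as follows. This assumes an NVIDIA GPU and an FFmpeg build with CUDA support and libx264. The `-hwaccel cuda` option offloads decoding to the GPU. Because `-hwaccel_output_format` is not set, decoded frames are copied back to system memory, where the software encoder can read them. The helper name, preset, and CRF value are illustrative assumptions.

```python
import shlex


def hybrid_cmd(src: str, dst: str, crf: int = 20) -> list[str]:
    """Decode on an NVIDIA GPU, then encode in software with libx264."""
    return [
        "ffmpeg",
        "-hwaccel", "cuda",       # GPU-assisted decoding (must come before -i)
        "-i", src,
        "-c:v", "libx264",        # software H.264 encoder
        "-preset", "slow",        # slower preset: better compression per bit
        "-crf", str(crf),         # constant-quality mode; lower = higher quality
        "-c:a", "copy",           # keep the audio stream as-is
        dst,
    ]


if __name__ == "__main__":
    print(shlex.join(hybrid_cmd("source.mkv", "output.mp4")))
```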